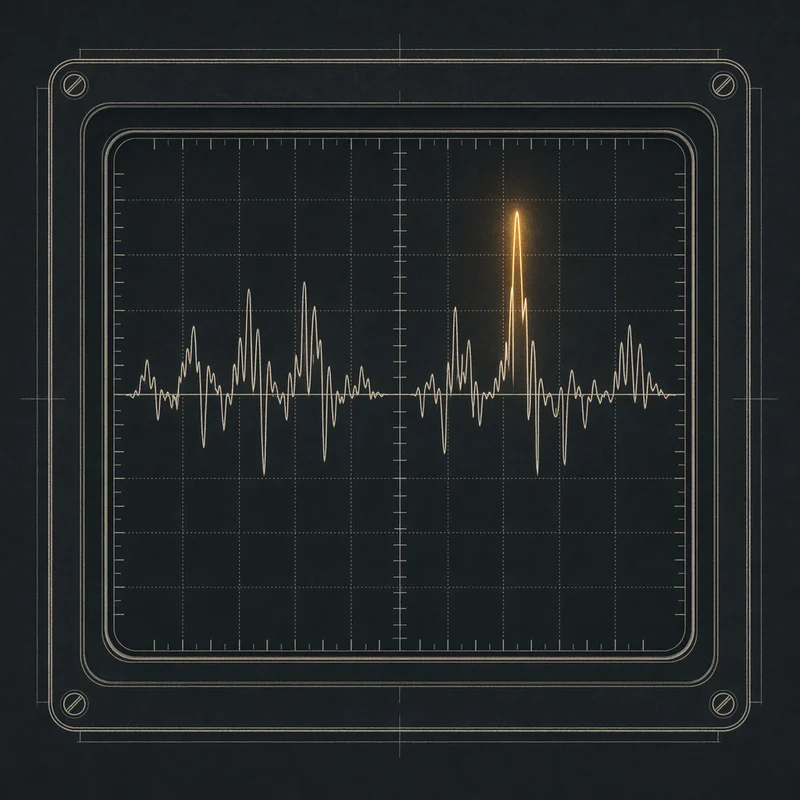

Voice Print

Media processing & person fingerprinting hub

Decades of personal audio and video were a black box. I wanted to ask: who said what, when, and to whom — and get a real answer.

Twenty years of audio and video sat on drives I never opened: a VHS archive ripped years ago, Plaud recorder clips, old Google Voice voicemails, iMessage attachments, meeting recordings, YouTube uploads, Facebook videos, Instagram stories, Apple Photos, Google Drive dumps. All of it was a black box. I wanted to ask one question (who said what, when, and to whom), and the answer was always “go remember, I guess.”

The pipeline ingests from 10+ sources and processes everything the same way. Whisper transcribes, Replicate’s diarization service splits the audio by speaker (with local pyannote as a fallback), WeSpeaker generates a 256-dim voice embedding for each segment, and InsightFace runs on video frames to extract 512-dim face embeddings. Everything lands in Postgres with pgvector, so person matching is a similarity search, not a string comparison. An auto-scaling pool of 1–8 workers coordinates through Nexus and leans on Forge for the model endpoints. On top sit 18 UI routes, including dashboard, upload queue, processing status, persons, review, transcripts, search, clusters, timeline, spam, clips, and identify.
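To make “person matching is a similarity search” concrete, here is a minimal sketch of matching one segment’s voice embedding against per-person reference vectors by cosine distance, the metric pgvector exposes as its `<=>` operator. The `person_centroids` mapping, the `match_person` name, and the 0.35 distance cutoff are hypothetical illustrations, not the project’s actual schema or threshold; the same idea would apply unchanged to the 512-dim InsightFace face embeddings.

```python
import math
from typing import Dict, Optional, Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity; for non-zero vectors this matches pgvector's <=> operator."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 1.0  # treat a zero vector as unrelated to everything
    return 1.0 - dot / (norm_a * norm_b)


def match_person(
    segment_embedding: Sequence[float],
    person_centroids: Dict[str, Sequence[float]],
    max_distance: float = 0.35,  # hypothetical cutoff; would need tuning on labeled data
) -> Optional[str]:
    """Return the closest known person, or None if nobody is close enough."""
    best_id: Optional[str] = None
    best_dist = float("inf")
    for person_id, centroid in person_centroids.items():
        dist = cosine_distance(segment_embedding, centroid)
        if dist < best_dist:
            best_id, best_dist = person_id, dist
    # Unknown speakers stay unassigned instead of being forced onto the nearest person.
    return best_id if best_dist <= max_distance else None


if __name__ == "__main__":
    # Toy 3-dim vectors; real WeSpeaker embeddings would have 256 dimensions.
    centroids = {"alice": [1.0, 0.0, 0.0], "bob": [0.0, 1.0, 0.0]}
    print(match_person([0.9, 0.1, 0.0], centroids))  # alice
    print(match_person([0.0, 0.0, 1.0], centroids))  # None
```

In Postgres the equivalent would be an `ORDER BY embedding <=> $1 LIMIT 1` query over a hypothetical `persons` table, letting a pgvector index such as HNSW do the nearest-neighbor work instead of a Python loop.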

The real unlock isn’t any single feature; it’s cross-temporal search. “Find every time my grandmother appears in a clip” becomes a query, not an archaeology project. “Find every meeting where we talked about the Florida pool business” becomes a query. “Show me everyone who was at Thanksgiving 2012” becomes a query. The pipeline was validated against a memorial video archive of a family member who passed, a stress test I wish I hadn’t needed but am grateful the pipeline was ready for. It hit 89.4% recall on person identification across 40 years of footage. The next step is giving every person a face by pulling profile photos from the Apple Photos People database, so the dashboard looks less like a spreadsheet and more like a yearbook.
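Once every clip carries resolved person IDs and a recording date, questions like the grandmother and Thanksgiving examples reduce to filters over a table of appearances. The sketch below assumes a hypothetical `Appearance` record (person, clip, date) held in memory; a real deployment would presumably run the same filters as SQL against Postgres, and the helper names are illustrative only.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Appearance:
    person_id: str     # identity already resolved by face or voice matching
    clip_id: str       # hypothetical clip identifier
    recorded_on: date  # when the clip was captured


def clips_with(person_id: str, appearances: Iterable[Appearance]) -> List[Appearance]:
    """Every appearance of one person across all sources, oldest first."""
    hits = [a for a in appearances if a.person_id == person_id]
    return sorted(hits, key=lambda a: (a.recorded_on, a.clip_id))


def people_present(appearances: Iterable[Appearance], start: date, end: date) -> Set[str]:
    """Everyone identified in any clip recorded between start and end, inclusive."""
    return {a.person_id for a in appearances if start <= a.recorded_on <= end}


if __name__ == "__main__":
    # Toy data for illustration only.
    archive = [
        Appearance("grandma", "vhs_017", date(1994, 12, 25)),
        Appearance("grandma", "phone_0412", date(2012, 11, 22)),
        Appearance("uncle_joe", "phone_0412", date(2012, 11, 22)),
        Appearance("grandma", "vhs_003", date(1988, 7, 4)),
    ]
    print([a.clip_id for a in clips_with("grandma", archive)])
    # Thanksgiving 2012 fell on November 22.
    print(sorted(people_present(archive, date(2012, 11, 22), date(2012, 11, 22))))
```

Because identity is resolved once at ingest time, the search itself never touches audio or video; it only walks already-labeled rows, which is what makes decades-spanning questions cheap.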