Forge

Home lab LLM inference gateway

Cloud LLM bills were getting silly and I wanted everything personal to run on hardware I own. Local-first, cloud as fallback.

Every project I ship uses LLMs. Paying OpenAI, Anthropic, and Google per token for things I run all day, every day, got silly fast — and none of them were built to let me own the data flowing through them. So I inverted the default: local-first for everything personal, cloud as the fallback when the local side is busy or unavailable. Forge is the gateway that makes that inversion work.

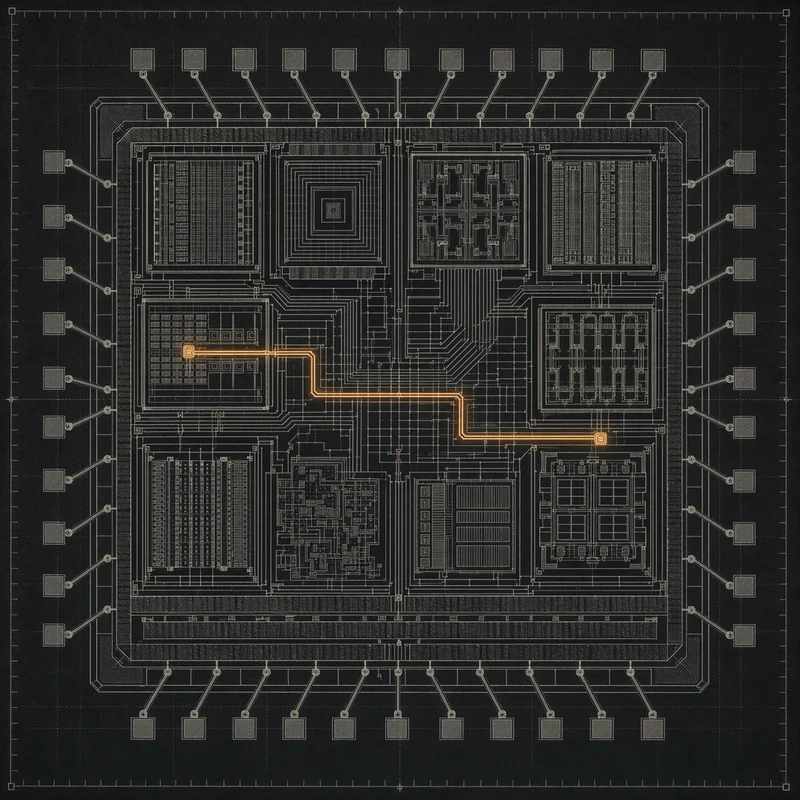

Twenty-nine models sit behind a single OpenAI-compatible endpoint: twenty-three local builds running across two AMD Strix Halo machines with 192 GB combined RAM, linked over Thunderbolt 5 at 40 Gbps and 0.12 ms of RTT, plus cloud pass-through to Claude Opus 4.7, Haiku, GPT-4o, Gemini 2.5 Pro, Flash Lite, and Grok 3. Qwen 3.6 35B-A3B is the canonical chat/code model; Qwen 3-VL 30B-A3B handles vision; specialized endpoints cover embeddings, rerank, Whisper transcription, InsightFace face recognition, Florence-2 OCR, and Chatterbox TTS. Llama-swap manages hot-swap between text models on the shared port, so the 287.7 GB VRAM footprint overstates steady-state residency: in practice only one or two text models are resident at any time. Observability comes from request logging to SQLite, Prometheus scraping every fifteen seconds, Grafana dashboards, and a GitHub-OAuth-gated status page.
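Because the endpoint is OpenAI-compatible, any client that can POST an OpenAI-style chat completion body can talk to it. The sketch below builds such a request with the standard library only. The base URL and the model id are assumptions made up for illustration; the actual address and model names the gateway exposes are not stated here.

```python
import json
import urllib.request

# Hypothetical values: the real gateway address and model id are not given.
FORGE_BASE_URL = "http://forge.local:8080/v1"
CHAT_MODEL = "qwen3.6-35b-a3b"


def build_chat_request(
    prompt: str,
    model: str = CHAT_MODEL,
    base_url: str = FORGE_BASE_URL,
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("Summarize today's request log.")
# To send it: urllib.request.urlopen(request), then JSON-decode the body.
```

Switching between a local model and a cloud pass-through model would then only mean changing the `model` field, which is the main appeal of putting everything behind one endpoint.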

The quiet story is the stability work. AMD Vulkan on gfx1151 is not a finished product: --vae-tiling is mandatory because the allocator can’t grant a contiguous 10+ GB buffer for SDXL VAE decode, SDXL needs --clip-on-cpu even with 96 GB VRAM to dodge kernel-level radv/amdgpu OOMs, and Wan video has a hard ceiling of roughly 2.7M voxels before the device buffer blows up. A gpu-watchdog systemd timer tails the forge-api journal every five minutes and auto-restarts the service on specific Vulkan corruption patterns. Then there’s “Creative Mode”, a platform-wide toggle that stops the text services to free VRAM for FLUX and Wan generation, flips a flag in the Nexus DB, and tells ARIA’s LLM router to fall back to cloud until the session is over. Every lesson in the code is one I learned the hard way.
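The watchdog idea is simple enough to sketch: read the last five minutes of the forge-api journal, look for known-bad lines, and restart the unit if any appear. This is an assumption-heavy illustration rather than the real gpu-watchdog. The two regex patterns are placeholders (the actual corruption signatures are not listed here), and the function names are invented for the example.

```python
from __future__ import annotations

import re
import subprocess
from typing import Iterable

UNIT = "forge-api"  # unit name taken from the text above

# Placeholder patterns, assumed for illustration; the real list is not given.
CORRUPTION_PATTERNS = [
    re.compile(r"VK_ERROR_DEVICE_LOST"),
    re.compile(r"ErrorDeviceLost"),
]


def find_corruption(lines: Iterable[str]) -> list[str]:
    """Return the journal lines that match any known corruption pattern."""
    return [
        line
        for line in lines
        if any(pattern.search(line) for pattern in CORRUPTION_PATTERNS)
    ]


def read_recent_journal(unit: str = UNIT, since: str = "5 minutes ago") -> list[str]:
    """Read the unit's recent journal entries as plain message lines."""
    result = subprocess.run(
        ["journalctl", "-u", unit, "--since", since, "--no-pager", "-o", "cat"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.splitlines()


def run_once() -> bool:
    """One watchdog pass: restart the unit if corruption was logged.

    Returns True when a restart was triggered.
    """
    hits = find_corruption(read_recent_journal())
    if hits:
        subprocess.run(["systemctl", "restart", UNIT], check=True)
    return bool(hits)
```

A systemd timer firing every five minutes would call `run_once()`. Keeping the pattern match in `find_corruption` separate from the `journalctl` and `systemctl` calls makes it possible to test the matching against captured log lines without touching a live service.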