QLoRA

Personal language model fine-tuning

I want a model that writes like me, not like an assistant. Twenty years of my own messages and emails are the training set.

Every cloud LLM is calibrated to a generic helpful-assistant voice. “Certainly! Here’s a list of…” “Great question! Let me break this down for you.” Most of what I write in a day is not that. It’s short, it’s direct, it has typos, it has em-dashes everywhere, it has one-word replies and 3 AM rambles and running jokes that stretch across months of conversation. I want a model that can write that, so when ARIA drafts a message on my behalf it reads like me and not like a phone support script.

The architecture is style transfer, not end-to-end generation. A big model (Claude Opus, or a local 72B model) writes the substance, and a small fine-tuned 3B model rewrites it in my voice before it ships. The small model doesn’t need to reason; it only needs to translate.

The training data is 75,329 records pulled from twenty years of my own sent messages across eleven sources, including iMessages, Google Chat from the WilmerHale era, Plaud transcripts, Gmail, Instagram DMs, Facebook, and even a PST dump of old work email: roughly 3.1 million tokens in ShareGPT JSONL format. Extraction scripts walk the Nexus Postgres database, strip HTML and quoted replies, filter out automated emails, one-word reactions, and tapback noise, and emit one system / human / gpt triplet per real Nic sentence.
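The cleaning steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the actual extraction code. The regexes, the `REACTIONS` set, the system prompt, and the choice to fill the human turn with the message being replied to are all hypothetical, because the write-up doesn’t say what goes in each slot. It drops only exact bare-reaction tokens rather than every one-word message, since one-word replies are part of the voice being captured. The output uses the common ShareGPT layout: a `conversations` list of `from`/`value` turns.

```python
import json
import re

# Hypothetical rules; the real scripts read rows out of the Nexus Postgres database.
QUOTED_HEADER = re.compile(r"^On .+ wrote:$", re.MULTILINE)  # Gmail-style reply header
HTML_TAG = re.compile(r"<[^>]+>")
TAPBACK = re.compile('^(Liked|Loved|Disliked|Laughed at|Emphasized|Questioned) ["\u201c]')
REACTIONS = {"lol", "haha", "ok", "k", "ty"}  # hypothetical bare reactions to drop

SYSTEM = "Rewrite the draft in Nic's voice."  # hypothetical system prompt


def clean_body(text: str) -> str:
    """Strip HTML tags, cut at the first quoted-reply header, drop '>' quote lines."""
    text = HTML_TAG.sub("", text)
    header = QUOTED_HEADER.search(text)
    if header:
        text = text[: header.start()]
    lines = [ln for ln in text.splitlines() if not ln.lstrip().startswith(">")]
    return "\n".join(lines).strip()


def keep(text: str, is_automated: bool) -> bool:
    """Reject automated mail, tapbacks, and bare reactions; keep other one-word replies."""
    if is_automated or not text:
        return False
    if TAPBACK.match(text):
        return False
    return text.lower().strip(" .!?") not in REACTIONS


def to_sharegpt(system: str, human: str, gpt: str) -> str:
    """Serialize one system / human / gpt triplet as a ShareGPT-style JSONL line."""
    turns = [("system", system), ("human", human), ("gpt", gpt)]
    record = {"conversations": [{"from": who, "value": value} for who, value in turns]}
    return json.dumps(record, ensure_ascii=False)


def build_jsonl(rows):
    """rows: (message replied to, Nic's raw body, is_automated) tuples."""
    out = []
    for prompt, body, is_automated in rows:
        reply = clean_body(body)
        if keep(reply, is_automated):
            out.append(to_sharegpt(SYSTEM, prompt, reply))
    return out


ROWS = [
    ("What time works?", "<p>Thursday at 3 works, see you there</p>\nOn Mon, Jan 1, Alex wrote:\n> any time?", False),
    ("lunch?", "Loved \u201clunch?\u201d", False),  # tapback: dropped
    ("", "Your receipt is attached.", True),  # automated: dropped
    ("that was wild", "lol", False),  # bare reaction: dropped
    ("still on for tonight?", "yep", False),  # one-word reply: kept
]

lines = build_jsonl(ROWS)
print("\n".join(lines))
```

Only the first and last rows survive. Keeping the filters as small pure functions makes it easy to test each rule against real edge cases from a given source before running the whole twenty-year export.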

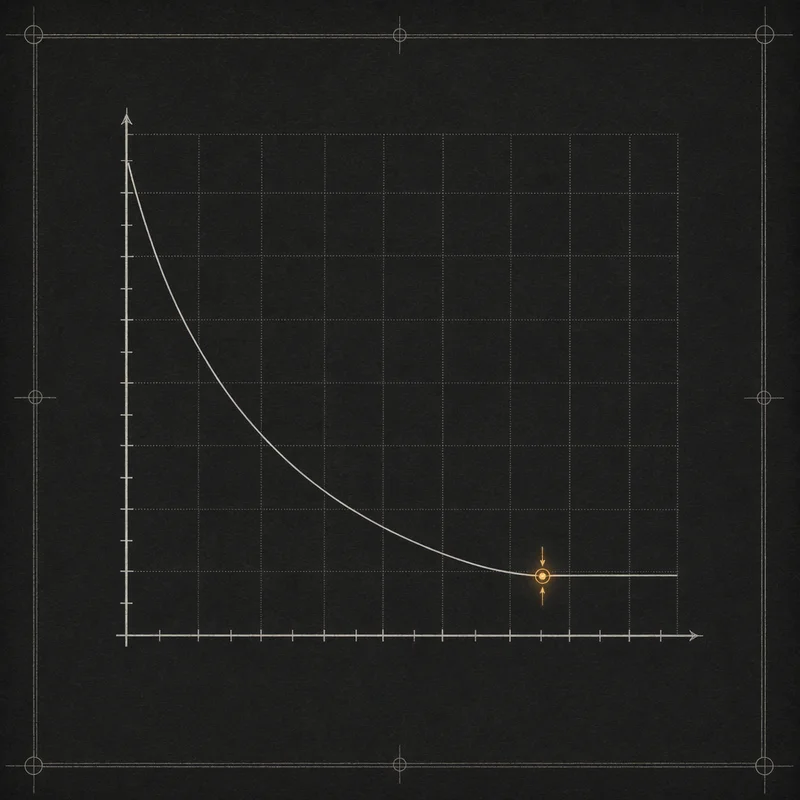

Picking the base model took three tries. Qwen 3.5-9B: the GatedDeltaNet hybrid attention blew through activation memory, and the forward pass OOM’d even on 96 GB of VRAM. Qwen 2.5-7B: trained cleanly, but a full three-epoch run took forty-two hours, which is too slow to iterate on LoRA hyperparameters. Qwen 2.5-3B comes in at around seventeen hours on a QLoRA config of r=16, alpha=32, targeting all seven projection matrices. That is a tradeoff I took willingly, because the small model is a translator, not a thinker.

The adapter plugs into Forge as a nic-voice endpoint that any downstream system can opt into per request. The extraction is done, the training set is in place, the systemd unit is wired, and the scaffolding is a sudo systemctl start away from serving. This is still the project I keep saying I’ll finish next weekend.
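To see what r=16 and alpha=32 on all seven projections actually trains, here is a back-of-the-envelope count in Python. It assumes the seven matrices are the q/k/v/o attention projections plus the gate/up/down MLP projections (the Hugging Face Qwen2 module names). It also takes the layer shapes from the published Qwen2.5-3B config: hidden size 2048, MLP size 11008, 36 layers, 16 query heads, and 2 key/value heads. None of those numbers come from the write-up. Each adapted matrix W (d_out × d_in) gets a pair A (r × d_in) and B (d_out × r), so it adds r(d_in + d_out) trainable parameters, and the low-rank update is scaled by alpha/r.

```python
# Assumed Qwen2.5-3B shapes (from its published config, not from the write-up).
HIDDEN = 2048
INTERMEDIATE = 11008
LAYERS = 36
HEADS = 16
KV_HEADS = 2
HEAD_DIM = HIDDEN // HEADS  # 128

R, ALPHA = 16, 32  # the LoRA rank and alpha quoted above

# (in_features, out_features) for the seven projection matrices in one decoder layer.
PROJECTIONS = {
    "q_proj": (HIDDEN, HEADS * HEAD_DIM),
    "k_proj": (HIDDEN, KV_HEADS * HEAD_DIM),  # grouped-query attention: narrower K/V
    "v_proj": (HIDDEN, KV_HEADS * HEAD_DIM),
    "o_proj": (HEADS * HEAD_DIM, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Trainable parameters for one adapter: A is r x d_in, B is d_out x r."""
    return r * (d_in + d_out)


per_layer = sum(lora_params(d_in, d_out, R) for d_in, d_out in PROJECTIONS.values())
total = per_layer * LAYERS
scaling = ALPHA / R  # multiplier applied to B @ A before adding it to W

print(f"{per_layer:,} per layer, {total:,} total, alpha/r = {scaling}")
# prints: 831,488 per layer, 29,933,568 total, alpha/r = 2.0
```

Under these assumptions the adapter is about 30M trainable parameters, on the order of 1% of the 3B base, which QLoRA keeps frozen in 4-bit. The three MLP projections account for three quarters of that, which is why targeting all seven matrices costs noticeably more than attention-only LoRA. If the training stack is Hugging Face PEFT (the write-up doesn’t say), the same choice would be expressed as LoraConfig(r=16, lora_alpha=32, target_modules=["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"]).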