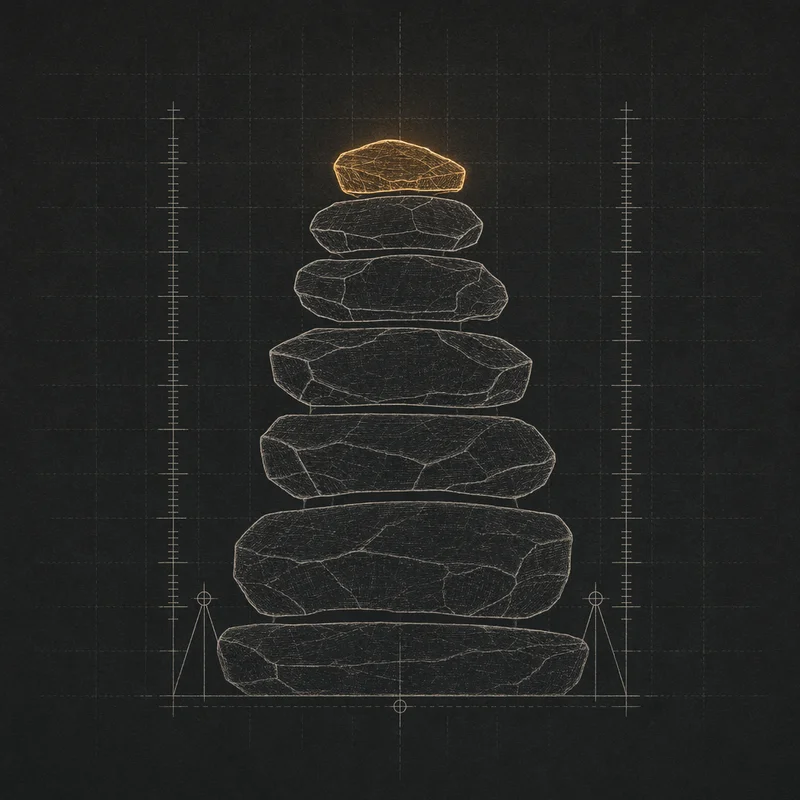

Cairn

Daily check-in capture surface for journaling, biography, and drafts

A record of where I've been, collected in small bites. Each question-and-response is a stone on a cairn — a trail marker. The downstream consumers are what turn the stack into a corpus.

A cairn is a stack of stones marking a trail. Each prompt-and-response in this app is one stone. Over time the stack becomes a record of where I’ve been: feedstock for daily journaling, for a multi-year biographical corpus, for Broadside’s first-person drafts, and for ARIA’s episodic memory. It started as a question: what does a personal LLM pipeline need that I can’t get from calendar entries and iMessages? The answer was specific, first-person language captured in the moment it’s said. That is exactly the material a retrieval system should rank highest when a model is trying to write in my voice. Cairn is the collection surface. Not a journaling app. No timeline UI. No editing of past entries. Capture is cheap; review happens elsewhere.

Server-side, the backend is operational end to end. A handler called cairn-day-plan runs at 00:10 ET each night, reads cadence config from agent_config('_platform','cairn.schedule.*'), and pre-materializes one cairn-question-generate row per ping in Nexus’ tempo_jobs table for the next twenty-four hours. The default is six pings a day: two scheduled anchors (morning-intent at 07:00, evening-recap at 20:30) and four random picks drawn from three time windows. When each fire time arrives, a Forge call generates the actual question (Claude Haiku 4.5, about 800 ms) and an APNs push hits the phone. Responses are written through a least-privilege nexus_ingest Postgres role into a per-source bronze table, raw_ingest_cairn, with a JSON-Schema-validated payload. That envelope is identical to the one every future ingest producer (Watch annotations, voice memos, music listens) will use. The first real scheduled question, generated 2026-04-22 at 18:30 ET, was “What’s one thing you’re looking forward to tomorrow?”
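The nightly day-plan step is easiest to see as a small planning function. The sketch below is hypothetical and is not the actual cairn-day-plan handler. The two anchor names and times come from the text. The three window bounds are invented placeholders, because the real values live in agent_config and aren’t given here. The rule that spreads four picks over three windows (one per window, the leftover to a random window) is also an assumption. `PlannedPing`, `plan_day`, and the field names are illustrative only. Each returned ping stands in for one cairn-question-generate row; the tempo_jobs row shape isn’t shown, so no SQL is attempted.

```python
import random
from dataclasses import dataclass
from datetime import date, datetime, time
from typing import List, Optional

# Anchor names and times as described in the text.
ANCHORS = [("morning-intent", time(7, 0)), ("evening-recap", time(20, 30))]

# HYPOTHETICAL window bounds: the real ones come from
# agent_config('_platform', 'cairn.schedule.*') and are not stated here.
WINDOWS = [
    (time(9, 0), time(12, 0)),
    (time(12, 0), time(17, 0)),
    (time(17, 0), time(20, 0)),
]


@dataclass(frozen=True)
class PlannedPing:
    fire_at: datetime  # naive ET wall-clock time; attach a zone before enqueueing
    trigger: str       # "scheduled" or "random"
    label: str         # anchor name, or "window-N" for random picks


def _minute_of_day(t: time) -> int:
    return t.hour * 60 + t.minute


def plan_day(day: date, random_picks: int = 4,
             rng: Optional[random.Random] = None) -> List[PlannedPing]:
    """Plan one day's pings (anchors plus random picks), sorted by fire time."""
    if random_picks < len(WINDOWS):
        raise ValueError("need at least one random pick per window")
    if rng is None:
        rng = random.Random()

    pings = [
        PlannedPing(datetime.combine(day, t), "scheduled", name)
        for name, t in ANCHORS
    ]

    # ASSUMPTION: every window gets one pick; leftover picks go to random windows.
    counts = [1] * len(WINDOWS)
    for _ in range(random_picks - len(WINDOWS)):
        counts[rng.randrange(len(WINDOWS))] += 1

    for idx, ((start, end), n) in enumerate(zip(WINDOWS, counts)):
        # Sampling without replacement keeps picks in one window from colliding,
        # and the half-open ranges keep adjacent windows from sharing a minute.
        slots = range(_minute_of_day(start), _minute_of_day(end))
        for m in rng.sample(slots, n):
            fire_at = datetime.combine(day, time(m // 60, m % 60))
            pings.append(PlannedPing(fire_at, "random", f"window-{idx}"))

    return sorted(pings, key=lambda p: p.fire_at)
```

Calling `plan_day(date(2026, 4, 22), rng=random.Random(7))` yields six pings: the two anchors plus four random picks, with at least one in each window. Planning the whole day up front, rather than rolling dice at runtime, means every fire time already exists as a row that can be inspected or cancelled before it goes out.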

The client side is still the blocker. The iOS scaffold is committed, but Phase B (building the app against the scaffold) requires a Mac session I haven’t spent yet. Until then, the backend runs against an empty phone: questions generate, fires enqueue, and pushes fail harmlessly against the one test device token. The product spec committed to three cadences: random, scheduled, and context-triggered (calendar ended, workout finished, geofence). Context-triggered needs a general Nexus event-bus substrate that doesn’t exist yet, so v1 ships with just the first two. Next is the silver-layer reconcile job, which closes the question-to-answer join and stratifies responses by trigger type. Once it lands, downstream consumers can start asking which trigger type produces the most voice-grade material.
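The reconcile job hasn’t landed yet, so what follows is only a sketch of what “close the question-to-answer join and stratify by trigger type” could look like. It is not the planned silver-layer implementation. The `Question`/`Answer` record shapes, the `question_id` key, and the `received_at` field are hypothetical stand-ins for whatever the bronze envelope actually carries. Keeping the earliest answer when duplicates appear is an assumption. Per-trigger word counts serve only as a crude placeholder for “voice-grade”, which the text doesn’t define.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional


@dataclass(frozen=True)
class Question:
    question_id: str  # hypothetical join key carried by both sides
    trigger: str      # e.g. "random" or "scheduled"
    text: str


@dataclass(frozen=True)
class Answer:
    question_id: str
    received_at: float  # hypothetical epoch seconds from the bronze row
    text: str


@dataclass(frozen=True)
class Reconciled:
    question: Question
    answer: Optional[Answer]  # None when the ping went unanswered


def reconcile(questions: Iterable[Question], answers: Iterable[Answer]) -> List[Reconciled]:
    """Left-join questions to answers, keeping the earliest answer per question."""
    first: Dict[str, Answer] = {}
    for a in sorted(answers, key=lambda a: a.received_at):
        first.setdefault(a.question_id, a)  # ASSUMPTION: later duplicates are retries
    # Answers whose question_id matches no question are dropped by this join.
    return [Reconciled(q, first.get(q.question_id)) for q in questions]


def stratify(rows: Iterable[Reconciled]) -> Dict[str, Dict[str, int]]:
    """Per-trigger counts: questions asked, questions answered, words in answers."""
    stats: Dict[str, Dict[str, int]] = defaultdict(
        lambda: {"asked": 0, "answered": 0, "words": 0}
    )
    for r in rows:
        s = stats[r.question.trigger]
        s["asked"] += 1
        if r.answer is not None:
            s["answered"] += 1
            s["words"] += len(r.answer.text.split())
    return dict(stats)


if __name__ == "__main__":
    questions = [
        Question("q1", "random", "What are you working on?"),
        Question("q2", "scheduled", "What's the plan for today?"),
        Question("q3", "random", "Where are you right now?"),
    ]
    answers = [
        Answer("q1", 200.0, "Finishing the draft tonight"),
        Answer("q2", 100.0, "Coffee with M."),
        Answer("q1", 300.0, "late duplicate"),
    ]
    print(stratify(reconcile(questions, answers)))
    # → {'random': {'asked': 2, 'answered': 1, 'words': 4}, 'scheduled': {'asked': 1, 'answered': 1, 'words': 3}}
```

A left join from the question side is the natural choice here. Unanswered pings stay visible, so answer rate per trigger type is measurable, and that is likely the first signal worth comparing before anything about the quality of the text itself.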