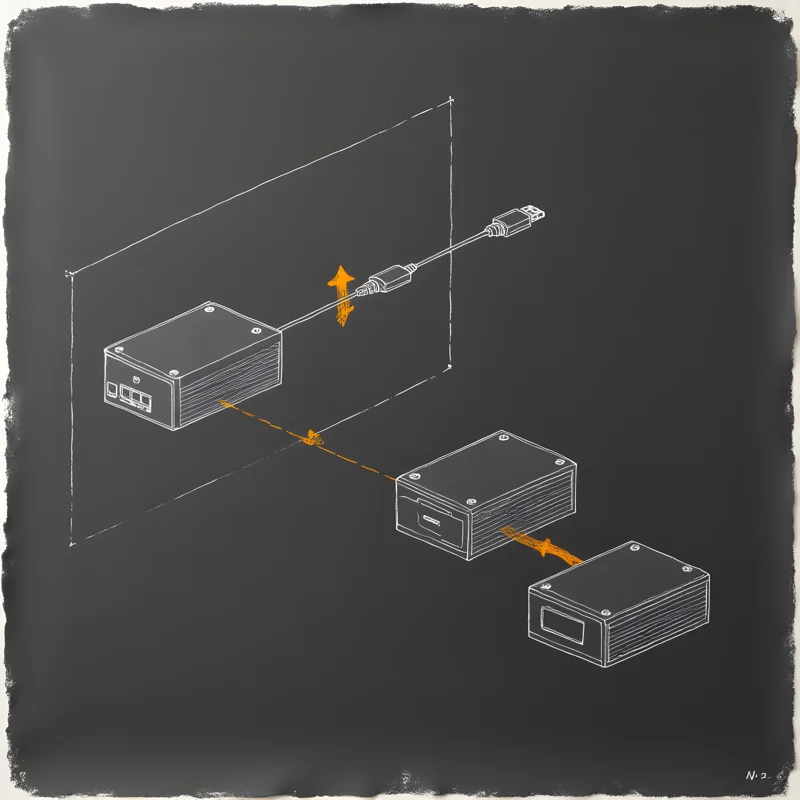

Crucible is the satellite. Same base hardware as Furnace (another GMKTec EVO-X2 with AMD Strix Halo), but half the memory (64 GB RAM, 64 GB VRAM) and a different job. It sits physically next to Furnace and connects over a direct Thunderbolt 5 cable: 40 Gbps, 0.12 ms round-trip, enough to make llama.cpp’s RPC tensor offload actually work. Llama 3.3 70B is served from Furnace, but roughly half its layers are offloaded to Crucible, so intermediate activations cross the cable on every token.
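To see why the round-trip time matters more than the raw bandwidth, here is a back-of-envelope sketch of the link cost per generated token. The 40 Gbps and 0.12 ms figures come from the paragraph above, and 8192 is the hidden size of Llama 3.3 70B. Everything else is an assumption made for illustration: one hidden-state vector crossing in each direction per token, 16-bit activations, and a link that sustains its nominal rate. The real llama.cpp RPC traffic pattern may involve more messages than this.

```python
# Back-of-envelope link cost per generated token for a two-node layer split.
# Assumptions (not from the post): one hidden-state vector crosses the cable
# in each direction per token, activations travel as 16-bit floats, and the
# link sustains its nominal rate.

HIDDEN_SIZE = 8192        # Llama 3.3 70B hidden dimension
BYTES_PER_VALUE = 2       # assumed 16-bit activations
LINK_BITS_PER_S = 40e9    # 40 Gbps, from the post
ROUND_TRIP_S = 0.12e-3    # 0.12 ms, from the post


def per_token_link_cost(
    hidden_size=HIDDEN_SIZE,
    bytes_per_value=BYTES_PER_VALUE,
    link_bits_per_s=LINK_BITS_PER_S,
    round_trip_s=ROUND_TRIP_S,
):
    """Return (payload_bytes, wire_seconds, total_seconds) for one token."""
    payload_bytes = hidden_size * bytes_per_value
    # The payload is serialized onto the wire once in each direction.
    wire_seconds = 2 * payload_bytes * 8 / link_bits_per_s
    total_seconds = round_trip_s + wire_seconds
    return payload_bytes, wire_seconds, total_seconds


if __name__ == "__main__":
    payload, wire, total = per_token_link_cost()
    print(f"payload per direction: {payload / 1024:.0f} KiB")
    print(f"time on the wire:      {wire * 1e6:.2f} us")
    print(f"link cost per token:   {total * 1e3:.3f} ms")
```

Under these assumptions the payload is tiny and latency dominates: the bytes spend a few microseconds on the wire, while the round trip costs over a tenth of a millisecond. That is still small next to the time a 70B model needs to compute one token, which is why a low-latency direct cable is what makes the split practical.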

The second job is running specialized services that don’t fit on Furnace. crucible-router serves an abliterated Qwen3 30B-A3B MoE (~21 GB, 3B active parameters) on port 8090. This is the router LLM Smithy uses to turn natural-language image prompts into structured generation plans. Abliterated means the refusal behavior has been edited out of the weights: clean JSON output, no moralizing, no “I can’t help with that” for image prompts that are merely edgy. It’s the right tool for a personal creative workbench that I own and am responsible for. crucible-postproc is a FastAPI worker on port 8770 that exposes CodeFormer face restoration and Real-ESRGAN 4× upscaling as HTTP endpoints, so Forge can call them without managing a second Python environment on Furnace.
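As a rough illustration of the prompt-to-plan step, the sketch below builds a request for the router and validates the JSON that comes back. Only the port (8090) is taken from the text. The hostname `crucible`, the OpenAI-style `/v1/chat/completions` path and response shape, and the plan keys are all assumptions made for the example, not Smithy's actual schema.

```python
import json
import urllib.request

# Hypothetical host and path; only the port comes from the post.
ROUTER_URL = "http://crucible:8090/v1/chat/completions"
# Hypothetical plan schema, for illustration only.
REQUIRED_KEYS = {"prompt", "negative_prompt", "width", "height", "steps"}


def build_router_request(user_prompt):
    """Build a chat-completions style payload asking for a JSON-only plan."""
    system = (
        "Turn the user's image request into a generation plan. "
        "Reply with a single JSON object with keys: "
        + ", ".join(sorted(REQUIRED_KEYS)) + "."
    )
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user_prompt},
        ],
        "temperature": 0.2,
    }


def parse_plan(reply_text):
    """Parse the model's reply and reject plans that miss required keys."""
    plan = json.loads(reply_text)
    if not isinstance(plan, dict):
        raise ValueError("plan must be a JSON object")
    missing = REQUIRED_KEYS - plan.keys()
    if missing:
        raise ValueError(f"plan is missing keys: {sorted(missing)}")
    return plan


def request_plan(user_prompt, url=ROUTER_URL, timeout=60):
    """POST the prompt to the router and return the validated plan."""
    body = json.dumps(build_router_request(user_prompt)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        reply = json.load(resp)
    # Assumes an OpenAI-compatible response body.
    return parse_plan(reply["choices"][0]["message"]["content"])
```

Validating the reply before it reaches the image pipeline is the point of the design: a model that reliably emits plain JSON makes `parse_plan` a cheap gate rather than a source of retries.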

Crucible is also where nexus-scaler runs — an autoscaler that watches the shared job queue on Furnace’s Postgres and spins up ephemeral bulk workers when there’s a backlog. Photo reprocessing, batch media fingerprinting, catch-up embeddings — the kind of work that doesn’t need to happen in real time but does need to happen eventually. The pattern is that Furnace is the authoritative, always-on node, and Crucible is the elastic workforce that scales up and down around it. They share a job queue, a database, a DNS namespace, and a Thunderbolt cable. They are less two machines and more one machine with two enclosures.
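The scale-up-on-backlog pattern can be sketched as a small reconcile loop. This is a minimal illustration, not nexus-scaler's actual code: the `jobs` table and `status` column in the query, the sizing numbers, and the `start_worker` / `stop_worker` callables are all hypothetical stand-ins.

```python
import math
import time

# Hypothetical table and column names; the post only says the queue is in Postgres.
BACKLOG_SQL = "SELECT count(*) FROM jobs WHERE status = 'queued'"


def desired_workers(backlog, jobs_per_worker=50, max_workers=4):
    """Map a backlog size to a worker count, capped at max_workers."""
    if backlog <= 0:
        return 0
    return min(max_workers, math.ceil(backlog / jobs_per_worker))


def reconcile(count_backlog, running, start_worker, stop_worker, **sizing):
    """One pass: compare the wanted worker count with the running one and act."""
    target = desired_workers(count_backlog(), **sizing)
    for _ in range(target - running):   # empty range when no scale-up is needed
        start_worker()
    for _ in range(running - target):   # empty range when no scale-down is needed
        stop_worker()
    return target


def run_forever(count_backlog, start_worker, stop_worker, interval_s=30):
    """Poll the queue and reconcile on a fixed interval."""
    running = 0
    while True:
        running = reconcile(count_backlog, running, start_worker, stop_worker)
        time.sleep(interval_s)
```

In a real deployment `count_backlog` would run something like `BACKLOG_SQL` against Furnace's database, and the start and stop callables would launch or tear down the ephemeral workers. Keeping `desired_workers` a pure function makes the scaling policy easy to test without a database.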